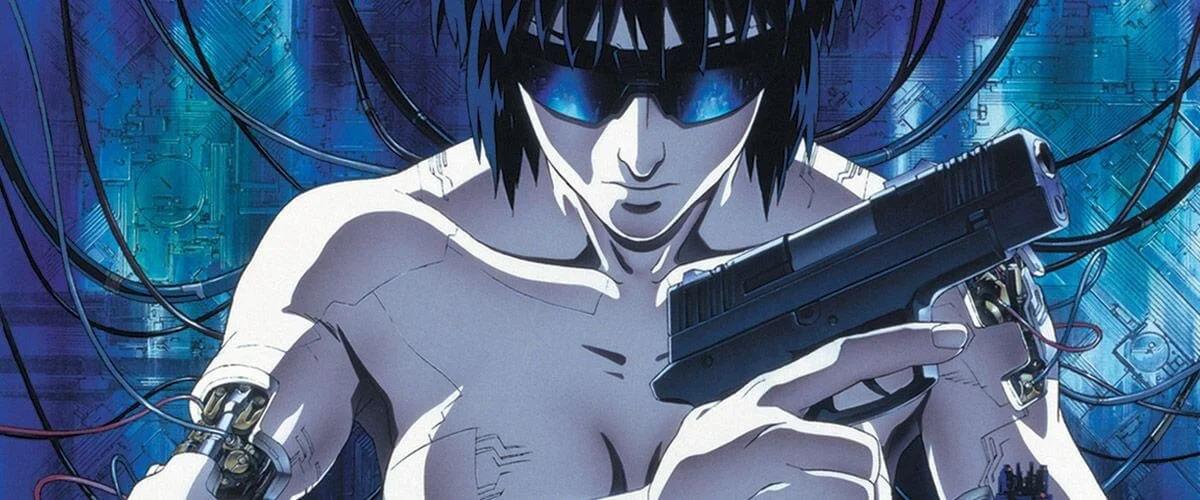

UX & AI: Ghost in the Prompt

For decades, designers aimed to do one thing: reduce friction between the user and the machine. We built clear affordances, menus, and buttons. The user clicked, dragged, and typed, and the machine did exactly what they asked.

Then came AI. Suddenly the machine doesn't need you to know the steps. It just needs to know your intentions. You don't click "Filter > Blur > Gaussian." You say "make the background blurry." The machine fills in the gaps. And it works like magic.

A manual web or app interface asks something of you. It says: prove to me that you know what you want to do. The prompt interface asks nothing. It just says: a guess is good enough.

This changes the designer's job. You're no longer only arranging buttons. You're crafting a fluid conversation between what the user wants and what the machine can do, across two radically different modes of thought.

Manual mode is for precision. The user wants surgical control. They want to feel the weight of each decision. The interface should be transparent, predictable, and fast: a tool, not a collaborator.

Prompt mode is for exploration. The user doesn't know the exact path. They want to describe a destination and see what comes back. The interface should be forgiving, generative, and slightly magical — a partner, not a tool.

Gen-Z: “The iPad Generation”

The magic of the iPad is how it removed the file system the way the mouse removed the command line. Open an app. Do the thing. Close the app. The file just… exists. Somewhere. In the cloud maybe. You never choose a folder. You never hit "Save As." You never lose a document because you forgot where you put it — because you never put it anywhere in the first place.

Teachers now say Gen-Z kids raised on iPads don't know how to use a computer mouse. Put one in front of them? They lift it off the pad. They struggle to double-click. They reach for the screen as if it should just respond to their fingers.

It's easy to laugh. But it also makes you realize: the mouse feels like ancient history to them. A proxy. An awkward translation layer between their intent and the machine. They grew up with touch: a finger-to-glass interface. No middleman.

The iPad was an important milestone in UX design.

Here's what was lost: the mental model of a cabinet. Folders inside folders. Naming things so you can find them later. A sense that your stuff lives somewhere specific. "What's a computer?" wasn't just kids being cute. That was honesty. A computer, to them, is a box where you organize things. But an iPad is a thing that just does what you want.

Here's what was gained: freedom from all that. No more deciding. No more filing. No more "wait, where did I save that?" The machine just remembers for you. Search, don't sort. Trust, don't organize.

Trust and Black Boxes

A manual interface is transparent. You know why something happened — because you made it happen. A prompt interface is a black box. The machine gives you an answer but not the reasoning. You have to trust it or fight it. Designers are now in the trust business more than the usability business.

The Ghost in the Prompt

In manual interfaces, you can undo. In prompt interfaces, you can regenerate. You type a prompt. The machine answers. You don't like it. So you hit regenerate. And again. And again. Each time, something new comes back. Different words. Different assumptions about what you meant.

Every prompt carries baggage. The words you choose reveal what you assume. "Make it professional" assumes a shared definition of professional. "Write like a human" assumes the machine knows what a human sounds like. The interface isn't neutral. It's a mirror.

Feedback Loops That Change You

You prompt. The machine answers. You adjust. The machine learns. But here's the quiet part: you learn too. You start writing prompts the way the machine likes. You change your voice to match its ear. The interface isn't just reflecting you anymore. It's training you to be more predictable. Who's training whom? We're already training ourselves again.

A new kind of game. Not a game of muscle memory. Speed. Precision. But a prompt game where mastery is articulation. Clarity of intent. The ability to say what you want so well that the machine has no choice but to obey.

We laughed at the generation that had to memorise DOS commands, and we call this progress. And it is. But progress has never meant freedom from translation.

1. Manual Interface

You tell the machine exactly what to do, step by step. The interface is just a tool — buttons, sliders, menus. You have to know how to do something, not just what you want.

Your job: Operator. You drive.

Machine's job: Obey. No guessing.

Like: A car dashboard or a calculator.

Example: Clicking "Save As…" and picking a folder.

One line: You define the path. Machine follows it.

Good: Precise, predictable, you're in control.

Bad: Hard to learn. Breaks if you miss a step.

2. Prompt Interface

You tell the machine what you want, not how to do it. The interface is just language. The machine figures out the steps.

Your job: Director. You say the goal.

Machine's job: Interpret and generate.

Like: Talking to a smart assistant or giving a brief to a designer.

Example: "Write a thank-you email" or "Explain this like I'm 10."

One line: You name the destination. Machine finds the path.

Good: Easy to start. Handles new stuff. Creative.

Bad: Unpredictable. Hard to fix when wrong. Feels out of control.
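The contrast between the two interfaces can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not how any real product works: `Action`, `manual_blur`, `interpret_prompt`, and the `KEYWORDS` table are hypothetical names, and a simple keyword lookup stands in for the language model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Action:
    """One explicit step the machine can carry out."""
    name: str
    radius: int


def manual_blur(radius: int) -> Action:
    # Manual interface: the user names the exact step and its parameter.
    return Action(name="gaussian_blur", radius=radius)


# Hypothetical vocabulary. A real prompt interface would use a language
# model here, not a lookup table.
KEYWORDS = {
    "blurry": Action(name="gaussian_blur", radius=8),
    "sharp": Action(name="sharpen", radius=2),
}


def interpret_prompt(prompt: str) -> Optional[Action]:
    # Prompt interface: the machine guesses the step from the wording,
    # including a parameter the user never specified.
    lowered = prompt.lower()
    for word, action in KEYWORDS.items():
        if word in lowered:
            return action
    return None  # the guess failed; the user has to rephrase
```

In the manual call the user picks the radius. In the prompt call the machine picks it, which is where both the convenience and the loss of control come from.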

That brings us to the bigger question: where do all these interfaces (voice, touch, click, gesture) actually sit in relation to each other? Let's line them up from farthest from the machine to closest.

Voice Prompting

You start far away. You can’t touch anything, so you just speak into the air. The system has to guess what you mean from your words alone. It often gets things wrong, but when it works, it feels like magic because you didn’t have to lift a finger.

You say, the source interprets.

Low effort for you, high ambiguity for the machine.

Distance: Very far. Your intention travels through language, ambiguity, and statistical inference.

Translation layer: Speech recognition → language model → intent parsing → action generation.

Philosophy: You speak at the source. The source listens, interprets, and acts on your behalf.

User’s burden: Low (articulation only). But misinterpretation is common.

Metaphor: Shouting a command across a room to a smart assistant. You don’t see the mechanics.

Core trade-off: High convenience, low control, high ambiguity.

“I say what I want. The source decides how.”

Air Gestures

A step closer. You’re still not touching anything, but now your whole body matters: a wave, a swipe in empty space. The system watches you the way an orchestra watches its conductor. It’s cleaner than voice because motion is less ambiguous than language, but you still have to learn a silent choreography.

You move, the source watches and guesses.

Expressive but imprecise; no contact, no language.

Distance: Far, but physically expressive. No contact, no language — just motion in space.

Translation layer: Motion capture → pattern matching → intent mapping.

Philosophy: You show the source what you mean, but at a distance. The source watches and guesses.

User’s burden: Medium — you must learn a gesture vocabulary, but no physical fatigue of touching.

Metaphor: Conducting an orchestra from 10 feet away. Broad sweeps, no fine detail.

Core trade-off: Clean (no contact), dramatic, but low precision and high cognitive load for complex tasks.

“I wave. The source interprets my shape and speed as intent.”

Click Input

You’re now at medium distance. You hold a tool — mouse, trackpad — that acts as your proxy. You don’t touch the source directly, but your clicks land exactly where you point. No guessing, no interpretation. The system just waits for your clean, discrete signal. This is where control is highest, but you carry all the weight.

You aim through an instrument, the source obeys precisely.

Zero ambiguity, total user responsibility.

Distance: Medium. You are physically separated (cursor as proxy), but the action is discrete and deterministic.

Translation layer: Minimal (cursor position → coordinate → event). No inference, only measurement.

Philosophy: You operate a tool that touches the source for you. The source waits for a clean, precise signal.

User’s burden: Medium-high — requires hand-eye coordination, cursor control, and understanding of click types (left/right/double).

Metaphor: Using a long stick to press a button across the table. Not your finger, but predictable.

Core trade-off: High precision, zero ambiguity, but physically abstract and indirect.

“I point through an instrument. The source responds exactly where I aim.”

Touch

Much closer. Your finger meets the glass. No proxy, no distance. You drag, tap, pinch — and the source responds right where you touch. It feels natural because you’re finally making physical contact. But your finger blocks your view, and there’s no hovering to peek under the hood. Still, for most everyday things, this is home.

You touch directly, the source responds immediately.

Intuitive, embodied, but limited precision.

Distance: Near. Your body directly contacts the representation of the source.

Translation layer: Very thin (capacitance/pressure → coordinate → action).

Philosophy: You are the cursor. The source touches you back (haptics, UI response). No proxy.

User’s burden: Low-medium — intuitive for gestures (swipe, tap, pinch), but precision limited by finger size.

Metaphor: Pressing a physical button or dragging a real object on a table. Immediate, embodied, but no hover.

Core trade-off: High immediacy and intuitive for basic actions, at the cost of screen smudges, occlusion (finger blocks view), and no hover state.

“I touch the source directly. It responds where my skin meets glass.”

Conclusion

So the real design question isn't "AI or not AI." The best experiences let users slip between modes without thinking. Start with a prompt to generate. Switch to manual to refine. Prompt again to remix.
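One way to picture that prompt, refine, remix loop is the rough sketch below. Everything in it is an assumption made for illustration: the `Session` class, the `PRESETS` table standing in for a generative model, and the design choice that manually set values are pinned and survive later prompts.

```python
from dataclasses import dataclass, field

# Hypothetical presets standing in for whatever a generative model returns.
PRESETS = {
    "dreamy": {"blur": 8, "contrast": 40, "saturation": 60},
    "crisp": {"blur": 0, "contrast": 70, "saturation": 50},
}


@dataclass
class Session:
    settings: dict = field(default_factory=dict)
    pinned: set = field(default_factory=set)

    def prompt(self, text: str) -> None:
        # Prompt mode: the machine proposes values but leaves pinned ones alone.
        lowered = text.lower()
        for preset_name, values in PRESETS.items():
            if preset_name in lowered:
                for key, value in values.items():
                    if key not in self.pinned:
                        self.settings[key] = value
                return
        raise ValueError(f"no preset matches {text!r}")

    def set_manually(self, key: str, value: int) -> None:
        # Manual mode: the user sets an exact value, and it stays put.
        self.settings[key] = value
        self.pinned.add(key)


session = Session()
session.prompt("make it dreamy")        # start with a prompt to generate
session.set_manually("blur", 3)         # switch to manual to refine
session.prompt("now make it crisp")     # prompt again to remix
# session.settings is now {"blur": 3, "contrast": 70, "saturation": 50}
```

Pinning is only one possible rule. The point is that the manual edit and the prompt share the same state, so the user can move between them without starting over.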

The kids with their iPads aren't broken. They just learned a different distance. And the mouse isn't dead. It's just an option. Truth is, you never land on one right interface.

Sometimes you need to be far away, shouting a command across the room and letting the machine do the heavy lifting. Sometimes you need to get close, finger on glass or cursor on a pixel, because only you can make it exact.

The best design doesn't pick a winner. It lets you move. Close when you need control. Far when you trust the machine. And somewhere in the middle when you're still figuring it out.

Because the goal was never to interact with a system. It was to do what you meant to do.